Lorenzo Piersante

I’m pretty sure that anyone, from time to time, has come across headlines such as “scientists predict that … (bunch of numbers)”, “economists expect that … (bunch of percentages + names of a country)”, “the weather tomorrow will be … (mild and sunny, we hope)”, but have you ever stopped to wonder – how exactly do they do that?

For example, when it comes to weather forecasts or climate change, we don’t expect that a lonely scientist in a dusty dark library with pen and paper will be able to do much. After all, we are talking about studying the motion of air masses moving across the entire globe!

Generally, they say that a computer simulation of a model (or several models) is run and some results are obtained. This series of articles will provide an overview of the ways computers are used to perform mathematical operations. We will begin with the very basics, and work our way up to some of the most modern approaches to physical model simulation. Let’s begin!

The first step of a computing machine

Machines that could work independently when provided with energy and instructions first appeared some 300 years ago. A lot earlier than you thought, isn’t it? Maybe not quite as far back as the invention of the wheel, but still quite a long time ago given the much higher degree of complexity involved.

These machines were called automatic looms and were introduced to the French textile industry by a man named Basile Bouchon in 1725. These looms could be controlled via a paper tape that presented a set of punched holes. A mechanism read the positions of the holes on the tape, and set in motion the loom accordingly. In this way the embroidery of geometrical patterns on a fabric could be automated.

This primitive machine (from a modern day perspective) possessed all the essential elements of a computer: instructions are loaded in some language, the machine reads them, it performs some operations, it prints the results. A computer does just the same, with the sole difference that the instructions and results are numbers.

Now, the obvious question is: what language does the computer speak? As you probably know, a computer uses binary numbers, that is a number system that, unlike the decimal system, is based on powers of 2, not powers of 10. Just as we have the digits from 0 to 9 in base 10, in binary the only available digits are 0 and 1. The illustration below shows how to go from a decimal number to a binary number, and vice versa.

NM is read as number N in base M, where M indicates the power on which the number system is based.

As you can see from the right hand side of the picture, the digit at each location corresponds to a specific power of 2. So, 1 indicates a power of 2 that is present in the number, 0 a power that is not present. You then sum of all the powers of 2 that make up the number to give its in decimal form.

The left hand side of the picture shows how to convert a decimal number to a binary number. Following the example, it works as follows:

57/32 = 1 remainder 25

25/16 = 1 remainder 9

9/8 = 1 remainder 1

1/4 = 0 remainder 1

1/2 = 0 remainder 1

1/1 = 1 remainder 0

Hence: 572 = 111001

In words: we first identify the largest power of 2 contained in the number, then execute repeated divisions of the reminders by the smaller powers until 20 (= 1) is reached. Finally, the digits of the binary number are given by the quotients (the numbers that divide successfully). Note that the initial picture showed a short-hand method to calculate the binary number, you only have to pay attention to the order of the digits!

Now that we have binary numbers, we want to perform some operations with them. The basic operations that a computer must be able to do are addition and multiplication. The diagrams below show the way they are executed. Notice the similarity with their decimal counterparts.

The addition table shows how two digits are added, where you have to remember that: 1 + 1 = 10 with 1 borrowed by the next digit in the number.

When performing a multiplication, we multiply the first factor (10111 in the example) by each digit of the second factor (110), remembering that each digit corresponds to a different power of 2. This means that the preliminary products (3 in the example) will have an extra number of 0s to the right equal to the exponent of the corresponding power of 2. This is analogous to multiplying a number by powers of 10 in decimals. For example 7*1 = 7 (add no zeros), 7*10 = 70 (add 1 zero), 7*100 = 700 (add 2 zeros) etc. The final step in the operation consists of adding up the preliminary products to obtain the final result.

We now have a grasp of the number system in which a computer operates. However, you might wonder why computers don’t simply use decimals like humans do. The reason lies in the hardware.

A computer works thanks to a main electrical component: the transistor. This device acts as a sort of voltage controlled switch, so that it has only TWO stable states: ON and OFF. Therefore, the natural choice for a computer for storing and processing information is:

ON = 1 and OFF = 0.

There were early attempts to make computers that worked with decimals, however, these machines turned out to be uneconomical in comparison to our beloved binary companions that persist today.

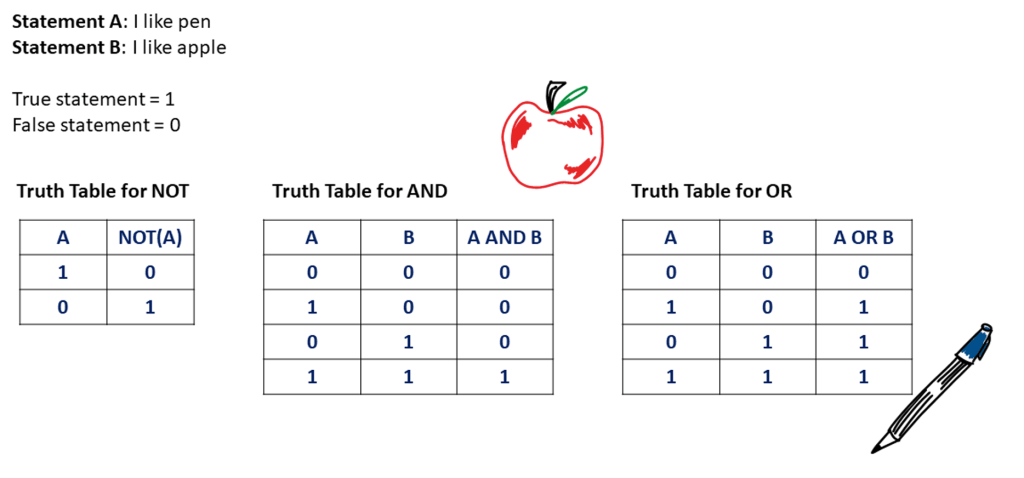

Using the binary number system is useful also because of its close connection to Boolean algebra and logic. This is a branch of Mathematics that studies the logical relationships between propositions, and how they change or combine due to the action of logical operators such as: NOT, AND, OR etc.

The link exists because, relatively to a statement, True can be represented by 1, and False by 0. The next illustration shows how two sample propositions are acted upon or combined via the above logical operators. The results are given in the form of a so-called truth table. This seemingly marginal detail is of supreme importance because it is relatively easy to implement logical operators in electronic circuits by using combinations of transistors called logic gates.

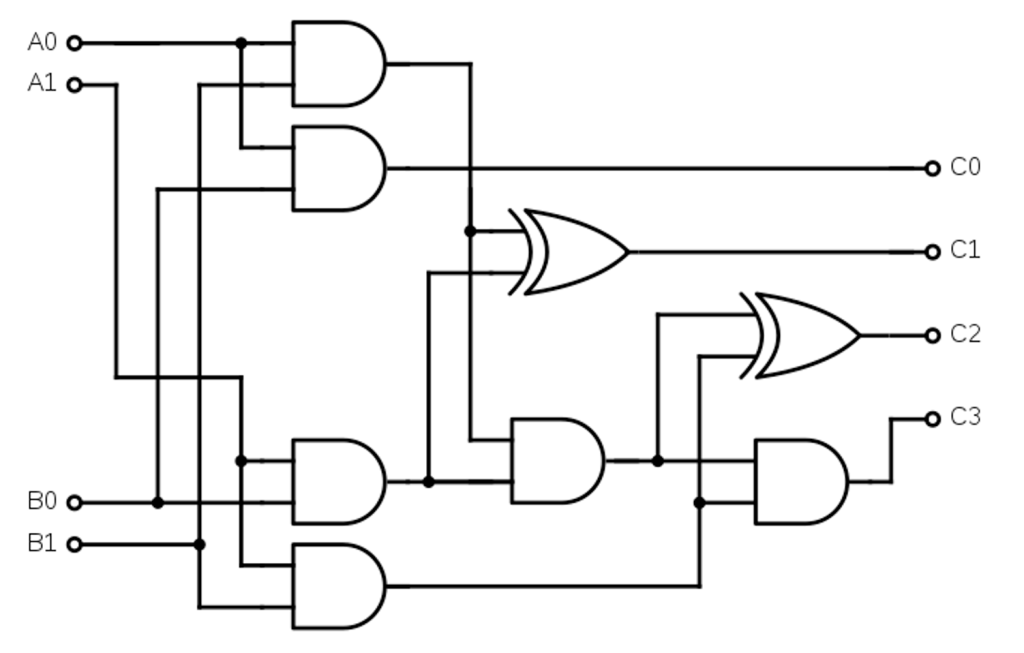

In fact, truth tables and logical expressions in the language of Boolean algebra find a direct translation in actual circuits as shown in the examples below.

In the diagrams above, the black lines represent wires, while the shapes where wires enter and exit are logic gates. The various shapes correspond to different kinds of logic gates. They react in different ways to the input signals (0 = no voltage, 1 = voltage), producing different outputs for the same input. The gates used in the digital adder are called NAND gates (AND followed by NOT). Whilst the gates used in the digital multiplier are AND (the round one) and XOR (exclusive OR, the pointy one).

These combinations of gates react to input voltages in such a way that they reproduce the truth tables that were given for addition and multiplication.

For example, the digital adder is built so that when the inputs are (0, 0), (1, 0), (0, 1) the C output remains OFF (0), while the S output reacts since the result is a single-digit number. On the other hand, when the input is (1, 1), the C output is ON (1), and the S output is OFF (0). The result is 10, that is a two-digit number.

For the sake of simplicity, I will not delve into the explanation of the digital multiplier. Nonetheless, the interested reader can find lots of material about the binary number system, Boolean algebra and logic circuits at this link.

The concepts that have been introduced up to this point constitute the essential instruments in the toolkit of any computer: binary arithmetic and Boolean algebra. Building on this foundation, in the next article of this series, we will demonstrate how a computer can deal with complicated tasks working only with these simple operations – see you there!

Dear

Cool

获取 Outlook for iOShttps://aka.ms/o0ukef

LikeLike

[…] the previous article we talked about the ways computers can handle basic mathematical operations. This is the result of […]

LikeLike

[…] started by developing a basic understanding of the inner workings of the computing machine, we then delved into the subject of numerical methods, and finally we explored some of most recent […]

LikeLike