Khanh Giang

As previously promised, in this last part of the series we will explore the interesting world of quantum measurement. With the need for quantum computers rising and the underlying logic of it seemingly understood, why don’t we have them already? Well, there are some potential limitations, as we shall see…

Measurement

Perhaps one of the most intriguing aspects of quantum computing is measurement. Of course to operate a quantum computer only needs to know whether a qubit is ‘on’ or ‘off’, rather than the exact state; and as such many of the problems related to measurement can be avoided. Nonetheless, these problems are still very interesting to look at, and perhaps when quantum computers become more readily available, they might become real concerns.

Qubits (or anything from the quantum realm) are extremely small, so even the smallest perturbation or vibration can cause them to behave chaotically. Not to mention that in a large system with many qubits, such as a quantum computer, there are bound to be a few errors in the state of the qubits. Since we want to find out the result of various calculations, measuring is an important – and required – step.

We almost immediately encounter our first problem: qubits exist in a middle ground: a(0) + b(1), but the measurement can only return 0 or 1. It’s the same as we saw in the first article with the Schrödinger’s Cat experiment, before the box is opened, the cat is in a ‘superposition’ of being both dead and alive with some probability. Once the box is opened, the cat is either 100% alive, or 100% dead.

One potential workaround might be to just make repeated measurements and gain statistical information. However, this unfortunately doesn’t work, because the first measurement doesn’t leave the state unaffected. Once the box is opened and we confirm the cat is dead, no amount of closing the box and reopening (i.e. measurement) can save our hypothetical cat.

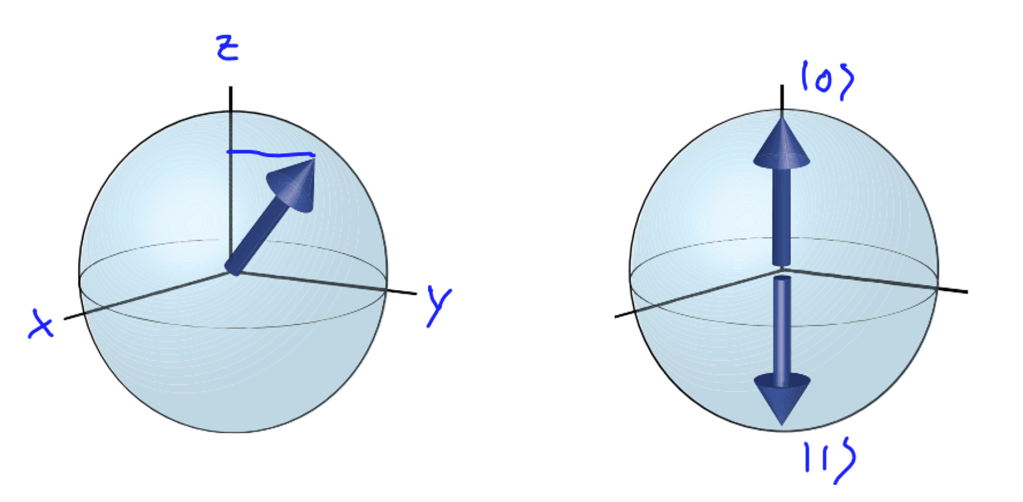

The next problem is closely related to the first: if the state is unknown, we can’t optimise the measurement. To measure a qubit, we first need to choose a suitable basis. A qubit has a lot of information, similar to how a human being has height, weight, blood type etc. and choosing a basis is like choosing a ruler or a scale. But choosing the basis isn’t easy.

Imagine a sphere of radius 1 (called a Bloch sphere) with (0) and (1) on the poles. Since the total probability of the two states must add up to 1, every combination of (0) and (1) is on the sphere surface. If we choose to use the z axis as our “ruler”, then we still get (0) a times, and (1) b times. But if we choose the x axis as our ruler, then no matter what state our qubit is in, the measurement will always be zero which is completely useless for us!

Fortunately, this problem solves itself. If we know in advance the qubit we put in (which we do since we prepared them, and usually they are either in state |0> or |1>) then we know after the logic gates and manipulation, which basis it should be in. (For a more in-depth discussion of logic gates head back to the second article).

Limitations

Quantum computers seem to offer solutions to many problems, so why are they not widely produced and used in 2023? Physicist David DiVincenzo has listed a few requirements for a practical quantum computer (of course, a functioning prototype, not commercial quantum computer doesn’t have to obey all of these requirements):

- Universal gate set

- Physically scalable to increase the number of qubits

- Qubits that can be initialised to arbitrary values

- Qubits that can be read easily

- Quantum gates that are faster than decoherence time

Let’s look at each of the requirements in turn starting with the second as we have already looked at universal gates in the previous article.

The second requirement to have a scalable system is a rather tricky one. Remember that our qubits are all in a superposition, are vulnerable to the slightest perturbation in heat, humidity, and electromagnetism (light, radio-waves, wifi etc.), and prone to making mistakes. To control such a multi-qubit system, we need to generate and coordinate almost perfectly-timed electrical signals. Scaling these systems to support a large number of qubits is difficult. Not to mention that as the system grows, there will be more “correcting” qubits, existing to check whether any of the other qubits are faulty, and so it would appear that the memory of a quantum computer may not grow quite as quickly as predicted.

The third and fourth problems of initialisation and readout are mentioned in the measurement section above, and a great deal of research is being conducted on these topics, with frequent progress. However, the condition under which these protocols (and in general, quantum computers) work are very extreme: requiring superconducting material and a closed system at 30 mili-Kelvin (approximately 0K which is -273.3 degrees C).

But by far, the biggest obstacle which has to be overcome is quantum decoherence time. We have seen on numerous occasions in this series that superposition is the reason why quantum computers are superior to classical computers, but this relies on an important characteristic called coherence. Coherence is the ability of a quantum state to remain in superposition and allow for interference.

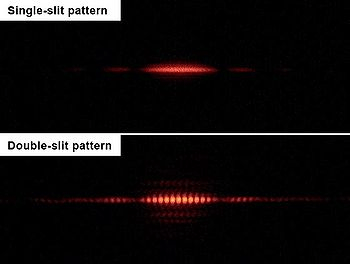

The Young double slit experiment is a classic demonstration of coherence. In physics, two wave sources are coherent if their frequency and waveform are identical.

Shining light through one slit, we see a single blurred-out blob, just as we would expect if we were to fire something macroscopic like a bullet. Shining light through both slits, we get an interesting banded pattern, and not the 2 blurry blobs we might expect. This is the result of constructive interference creating bright spots, and destructive interference making dark spots. For these interference patterns to form, the light has to be coherent (which in this case means it comes from one source).

However, coherence isn’t permanent, unless we are in a perfectly isolated system. Every action we perform on the system of qubits will share coherence with the environment which is lost over time, just like energy is lost due to friction in classical mechanics.

It is hoped that if the error rate remains small enough, it might be possible to use quantum error correction to suppress errors and decoherence. However, this in turn leads to the problems of scalability which we’ve just been discussing: the larger the computer, the more correcting bits required. This still remains a huge problem to be dealt with.

Applications

Despite the current limitations, quantum computing already has many applications, mainly in solving currently solvable problems that are deemed unsolvable by classical computers because of the time taken. The most notable example is in cryptography and solving integer factorisation problems.

The premise of this problem is quite simple. Let’s try to calculate 73 * 97 by hand. It would take a little while, but you will eventually find the answer 7,081. But, can you factorise (break down into prime numbers multiplied together) a smaller number like 1343 by hand? The first step is to perhaps realise that one of the primes must be smaller than the square root of 1343 (because if both/all primes are larger than the square-root, the number will be bigger than 1343!). It’s again a little tricky to calculate the square root by hand, but an estimate is good enough: somewhere in the middle of 30 (302 is 900) and 40 (402 is 1600).

So, what are the primes smaller than 40? 2, 3, 5, 7, 11, 13, 17, 19, 23, 29, 31, 37. The factorisation of 1343 can’t have 2 and 5 because the last digit of it is 3 (so clearly not even, or ending with a 0 or 5), and the sum of 1 + 3 + 4 + 3 = 11 isn’t divisible by 3, so therefore it doesn’t have 3 in it either. (For more divisibility tricks see this article by previous TRM intern Alex Nikic).

The divisibility rules for 7 and 11 are complicated enough that for this number it’s better to just divide by hand. Then we also have to try 13, then 17… A-ha! 1343/17 is 79, which is another prime, so we have found the factorisation 1343 = 17 * 79. As we’ve seen, this process is much, much more complicated and takes more time than the multiplication.

The same goes for computers. For a classical computer, division takes 6 times longer than multiplication. And for numbers that are several dozens of digits long, the process of trial and error will take so long that it isn’t worth it to even try. This is in fact one of the main reasons why integer factorisation is widely used in cryptography.

The method works by multiplying two very long prime numbers A and B, and using their result as the public key. Each of the two people involved in sending the encrypted information hold one of the private keys, A or B, which is never revealed. They send the message with the public key to the other party, and if the other party can factorise it, they can read the message. If they have the other key, this should be easy – although it takes longer than multiplications, computers still handle divisions very well.

But if there’s a third party wanting to break into the conversation, they would need to factorise the large public key. There isn’t a smart trick to do this – the computer will have to try again and again, going through all the prime numbers as it tries to find the correct factorisation. (Of course more modern methods involve more numbers and arithmetic operations eg. exponentiation, modulus or taking remainder etc.).

Classically, it takes far too long to crack – hundreds of years, thousands of years or even more. But with quantum computers, where the processing rate is exponentially faster, there is a high chance they might be able to crack these factorisation problems in a reasonable amount of time. In 2016, scientists at MIT were able to reduce the number of qubits required to factorise the number 15 from twelve down to five qubits. Of course 15 is a small number which is easily factorised, but the hope os that as quantum computing grows, we will be able to factorise much bigger numbers.

However, at this stage, you do not need to worry about your bank account, or some private messages getting broken into any time soon. Unlike the computational power of these qubits, which can’t realistically grow exponentially due to the limitations stated above, just by adding a few more digits to the prime number private key (or even better, a few more digits to the exponential!), the factorisation difficulty also rises exponentially.

A second important application of quantum computers comes in the form of optimisation. Imagine you are presented with a maze puzzle – how would you try to solve it? One approach might be to always go left or always right, and when you hit a dead end, trace back and try to follow another path. Our classical computer solves the maze in the same way: it tries every path possible until it encounters the solution.

In fact, this is also how the route finding feature on Google Maps works, using a “fancier” type of search called Dijkstra’s algorithm. (We won’t go into the details of this algorithm here, but in short it takes one starting node A, and calculates the distance between that node and the other nodes it is connected to. Node A is then “removed” and the process is repeated for each of the other nodes until we have a shortest remaining path). With quantum computing, all of the possible routes (or distances) could be checked exponentially faster, and as such the optimal route found much more quickly.

All in all, I hope that through this short series of articles I’ve been able to convince you that whilst quantum computing is an exciting topic, it’s also very new and complex with many new things still be to invented and/or discovered. With many governments and organisations interested and investing in this field, it will surely bloom in the not-so-distant future.

[…] The Maths of a Cup of Tea, Hugh Simmons– Traffic Shock Waves, Ifan Rogers– Why don’t we have Quantum Computers already? Khanh Giang– Why all music is out of tune, Aidan […]

LikeLike

[…] 可以參考 Why can’t we use quantum computers? […]

LikeLike